Appendix B — The impact of assumption violations on test results

Although we have discussed checking for bias in our models, you will likely have noticed that assumption tests are very rarely reported in the psychological literature, which could lead you to question the influence that assumption violations have on inference.

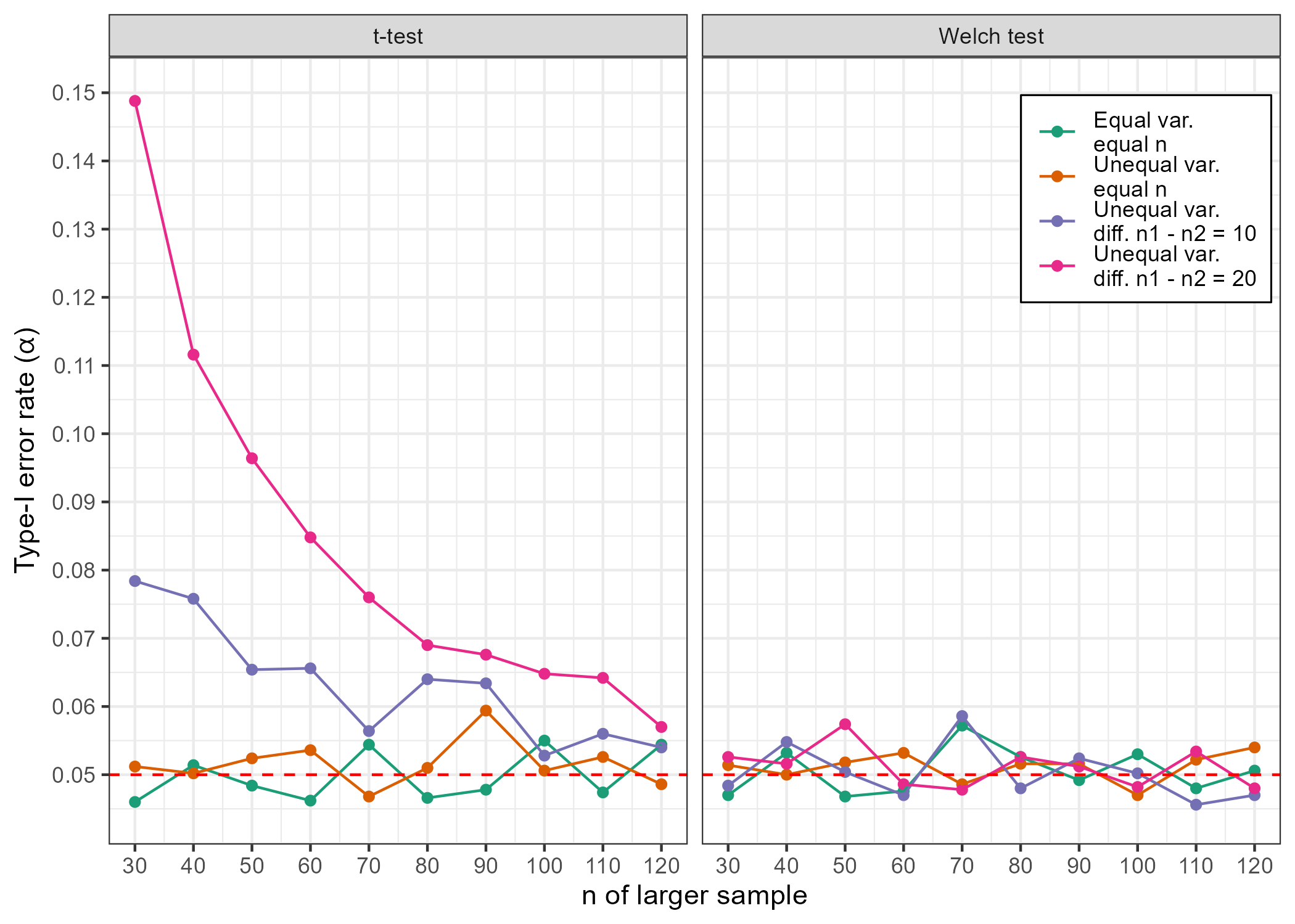

Normally, we do not know whether \(H_0\) or \(H_1\) is true, but simulations allow us to fix “population” parameters and then draw samples to investigate how robust our hypothesis tests are against assumption violations. For example, in the case of an independent samples t-test we can look at the long-run type-I error rate (\(\alpha\)) of the test by repeatedly drawing samples from a simulated population where the true mean difference - \(\mu_{group 1}\) - \(\mu_{group 1}\) - is equal to 0 and calculating the proportion of significant test results. Simulating in this way under conditions where test assumptions are met and comparing the results with simulations generated in the presence of assumption violations can give us information on the impact of these violations.

B.1 Homogeneity of variances

Although Field (Field et al., 2025) discusses how violations of the assumption of normality may have only trivial impacts in large enough samples, he also mentions problems associated with heteroscedasticity, particularly when group sizes differ. To highlight this, I present results from simulations where, on each simulation iteration, I drew two virtual groups of participants from a normal distribution with \(\mu\) = 0 under four conditions:

- Equal variances and sample sizes: \(\sigma\) = 1

- Unequal variances and equal sample sizes: \(\sigma\) group 1 = 1, \(\sigma\) group 2 = 2

- Unequal variances and unequal sample sizes: \(\sigma\) group 1 = 1, \(\sigma\) group 2 = 2; difference between \(n\) group 1 and \(n\) group 2 = 10

- Unequal variances and unequal sample sizes: \(\sigma\) group 1 = 1, \(\sigma\) group 2 = 2; difference between \(n\) group 1 and \(n\) group 2 = 20

In each of these four conditions, I iterated through sample sizes from \(n\) group 1 = 30 - \(n\) group 1 = 120, in steps of 10. For each of these sample size iterations, I ran 5,000 independent-samples t-tests and 5,000 Welch tests for each of the conditions presented above and saved the proportion of significant test results, assuming the typical \(\alpha\) = .05. The results are shown below.

We can see that the conventional independent-samples t-tests fare well when sample sizes are equal, but we see type-I error rates in excess of .05 when both variances and sample sizes are unequal across groups, particularly in smaller samples. In contrast, the Welch test (which does not assume equal variances) appears robust to sample size and variance inequalities, returning ~5% false-positives under all conditions.

B.2 Conclusion

Hopefully this limited demonstration illustrates some of the problems that can arise from violations of testing assumptions. In situations where homogeneity of variances cannot be confirmed, it would be wise to use adjustments or alternatives to standard tests, such as the Welch statistics for independent-samples t-tests and one-way ANOVA.